- java.lang.Object
- de.jstacs.algorithms.optimization.DifferentiableFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.OptimizableFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractOptimizableFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractMultiThreadedOptimizableFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.DiffSSBasedOptimizableFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.gendismix.LogGenDisMixFunction
- de.jstacs.classifiers.differentiableSequenceScoreBased.gendismix.OneDataSetLogGenDisMixFunction

All Implemented Interfaces: Function, MultiThreadedFunction

public class OneDataSetLogGenDisMixFunction extends LogGenDisMixFunction

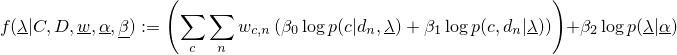

This class implements the the following function is the weight for sequence

is the weight for sequence  and class

and class  .

The weights

.

The weights  have to sum to 1. For special weights the optimization turns out to be

well known

have to sum to 1. For special weights the optimization turns out to be

well known

- if the weights are (0,1,0), one obtains maximum likelihood,

- if the weights are (0,0.5,0.5), one obtains maximum a posteriori,

- if the weights are (1,0,0), one obtains maximum conditional likelihood,

- if the weights are (0.5,0,0.5), one obtains maximum supervised posterior,

- if the weight of the prior term (the third weight) is 0, one obtains the generative-discriminative trade-off,

- if the weight of the prior term (the third weight) is 0.5, one obtains the penalized generative-discriminative trade-off.
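The special cases in the list above can be written down as a small lookup. The following is a minimal sketch, not part of Jstacs: the class `BetaWeightsSketch` and its methods are hypothetical, and the order of the three weights (conditional likelihood, likelihood, prior) is an assumption read off the tuples in the list.

```java
public class BetaWeightsSketch {

    // Tolerance for floating-point comparisons (an arbitrary choice for this sketch).
    private static final double EPS = 1e-9;

    private static boolean eq(double a, double b) {
        return Math.abs(a - b) < EPS;
    }

    /** Checks that exactly three weights are given and that they sum to 1. */
    public static boolean sumsToOne(double[] beta) {
        return beta != null && beta.length == 3 && eq(beta[0] + beta[1] + beta[2], 1.0);
    }

    /** Names the learning principle for the special cases listed above. */
    public static String principle(double[] beta) {
        if (!sumsToOne(beta)) {
            throw new IllegalArgumentException("expected three weights that sum to 1");
        }
        // Assumed order: conditional likelihood, likelihood, prior.
        double cl = beta[0], l = beta[1], prior = beta[2];
        if (eq(cl, 0) && eq(l, 1) && eq(prior, 0)) return "maximum likelihood";
        if (eq(cl, 0) && eq(l, 0.5) && eq(prior, 0.5)) return "maximum a posteriori";
        if (eq(cl, 1) && eq(l, 0) && eq(prior, 0)) return "maximum conditional likelihood";
        if (eq(cl, 0.5) && eq(l, 0) && eq(prior, 0.5)) return "maximum supervised posterior";
        // The exact tuples are tested first because they also match the general cases below.
        if (eq(prior, 0)) return "generative-discriminative trade-off";
        if (eq(prior, 0.5)) return "penalized generative-discriminative trade-off";
        return "unnamed combination";
    }

    public static void main(String[] args) {
        System.out.println(principle(new double[] {0, 1, 0}));
        System.out.println(principle(new double[] {0.25, 0.75, 0}));
    }
}
```

The exact tuples are checked before the two general cases because, for example, (0,1,0) also has a prior weight of 0.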

It can be used to find the parameters that maximize this function. This can also be done with LogGenDisMixFunction. However, this class was implemented to allow faster function and gradient evaluation, which leads to faster optimization. The benefit grows as the number of classes increases.

This class enables the user to exploit all CPUs of the computer by using threads. The number of compute threads is set in the constructor.
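The general pattern behind multi-threaded evaluation can be sketched as follows: the data is split into parts, each thread evaluates its part with its own copy of a stateful scoring object, and the partial results are summed. This is an illustration only. `PerThreadCloneSketch` and its nested `Scorer` are hypothetical stand-ins, and the way Jstacs distributes the work internally is not described here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class PerThreadCloneSketch {

    /** Hypothetical scoring object with internal state that is not thread-safe. */
    static class Scorer implements Cloneable {
        private double[] buffer = new double[1]; // scratch space reused on every call

        double score(double x) {
            buffer[0] = x * x;
            return buffer[0];
        }

        @Override
        public Scorer clone() {
            try {
                Scorer copy = (Scorer) super.clone();
                copy.buffer = buffer.clone(); // deep copy, so clones do not share scratch space
                return copy;
            } catch (CloneNotSupportedException e) {
                throw new AssertionError(e);
            }
        }
    }

    /** Sums the scores of all data points using the given number of threads. */
    public static double parallelSum(double[] data, int threads) {
        if (threads <= 0) {
            throw new IllegalArgumentException("the number of threads must be positive");
        }
        Scorer prototype = new Scorer();
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            List<Future<Double>> parts = new ArrayList<>();
            int chunk = (data.length + threads - 1) / threads;
            for (int t = 0; t < threads; t++) {
                final int start = Math.min(data.length, t * chunk);
                final int end = Math.min(data.length, start + chunk);
                final Scorer own = prototype.clone(); // each thread works on its own clone
                parts.add(pool.submit(() -> {
                    double sum = 0;
                    for (int i = start; i < end; i++) {
                        sum += own.score(data[i]);
                    }
                    return sum;
                }));
            }
            double total = 0;
            for (Future<Double> part : parts) {
                total += part.get(); // join the partial results
            }
            return total;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException("parallel evaluation failed", e);
        } finally {
            pool.shutdown();
        }
    }
}
```

In this sketch, a shallow `clone()` would let all threads write to the same scratch buffer, so results could be corrupted silently. To use every core, the thread count could be taken from `Runtime.getRuntime().availableProcessors()`.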

It is very important for this class that the DifferentiableSequenceScore.clone() method works correctly, since each thread works on its own clones.

Author: Jens Keilwagen

Nested Class Summary

Nested classes/interfaces inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.OptimizableFunction

OptimizableFunction.KindOfParameter

Field Summary

Fields inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.gendismix.LogGenDisMixFunction

beta, cllGrad, helpArray, llGrad, prGrad

Fields inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.DiffSSBasedOptimizableFunction

dList, iList, prior, score, shortcut

Fields inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractMultiThreadedOptimizableFunction

params, worker

Fields inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractOptimizableFunction

cl, clazz, data, freeParams, logClazz, norm, sum, weights

Constructor Summary

| Constructor and Description |
|---|
| OneDataSetLogGenDisMixFunction(int threads, DifferentiableSequenceScore[] score, DataSet data, double[][] weights, LogPrior prior, double[] beta, boolean norm, boolean freeParams): The constructor for creating an instance that can be used in an Optimizer. |

Method Summary

| Modifier and Type | Method and Description |
|---|---|
| protected void | evaluateFunction(int index, int startClass, int startSeq, int endClass, int endSeq): This method evaluates the function for a part of the data. |
| protected void | evaluateGradientOfFunction(int index, int startClass, int startSeq, int endClass, int endSeq): This method evaluates the gradient of the function for a part of the data. |
| DataSet[] | getData(): Returns the data for each class used in this OptimizableFunction. |
| void | setDataAndWeights(DataSet[] data, double[][] weights): This method sets the data set and the sequence weights to be used. |

Methods inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.gendismix.LogGenDisMixFunction

joinFunction, joinGradients, reset

Methods inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.DiffSSBasedOptimizableFunction

addTermToClassParameter, getClassParams, getDimensionOfScope, getParameters, reset, setParams, setThreadIndependentParameters

Methods inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractMultiThreadedOptimizableFunction

evaluateFunction, evaluateGradientOfFunction, getNumberOfAvailableProcessors, getNumberOfThreads, prepareThreads, setParams, stopThreads

Methods inherited from class de.jstacs.classifiers.differentiableSequenceScoreBased.AbstractOptimizableFunction

getParameters, getSequenceWeights

Methods inherited from class de.jstacs.algorithms.optimization.DifferentiableFunction

findOneDimensionalMin

Constructor Detail

OneDataSetLogGenDisMixFunction

public OneDataSetLogGenDisMixFunction(int threads, DifferentiableSequenceScore[] score, DataSet data, double[][] weights, LogPrior prior, double[] beta, boolean norm, boolean freeParams) throws IllegalArgumentException

The constructor for creating an instance that can be used in an Optimizer.

Parameters:

- threads - the number of threads used for evaluating the function and determining the gradient of the function
- score - an array containing the DifferentiableSequenceScores that are used for determining the sequence scores; if the weight beta[LearningPrinciple.LIKELIHOOD_INDEX] is positive, all elements of score have to be DifferentiableStatisticalModel instances
- data - the DataSet containing the data that is needed to evaluate the function
- weights - the weights for each Sequence in data and each class
- prior - the prior that is used for learning the parameters
- beta - the beta-weights for the three terms of the learning principle
- norm - the switch for using the normalization (division by the number of sequences)
- freeParams - the switch for using only the free parameters

Throws:

- IllegalArgumentException - if the number of threads is not positive, or if the number of classes or the dimension of the weights is not correct

Method Detail

setDataAndWeights

public void setDataAndWeights(DataSet[] data, double[][] weights) throws IllegalArgumentException

Description copied from class: OptimizableFunction

This method sets the data set and the sequence weights to be used. It also allows further preparation for the computation on this data.

Overrides: setDataAndWeights in class AbstractMultiThreadedOptimizableFunction

Parameters:

- data - the data sets
- weights - the sequence weights for each sequence in each data set

Throws:

- IllegalArgumentException - if the data or the weights cannot be used

getData

public DataSet[] getData()

Description copied from class: OptimizableFunction

Returns the data for each class used in this OptimizableFunction.

Overrides: getData in class AbstractOptimizableFunction

Returns: the data for each class

See Also: OptimizableFunction.getSequenceWeights()

evaluateGradientOfFunction

protected void evaluateGradientOfFunction(int index, int startClass, int startSeq, int endClass, int endSeq)

Description copied from class: AbstractMultiThreadedOptimizableFunction

This method evaluates the gradient of the function for a part of the data.

Overrides: evaluateGradientOfFunction in class LogGenDisMixFunction

Parameters:

- index - the index of the part
- startClass - the index of the start class
- startSeq - the index of the start sequence
- endClass - the index of the end class (inclusive)
- endSeq - the index of the end sequence (exclusive)

evaluateFunction

protected void evaluateFunction(int index, int startClass, int startSeq, int endClass, int endSeq) throws EvaluationException

Description copied from class: AbstractMultiThreadedOptimizableFunction

This method evaluates the function for a part of the data.

Overrides: evaluateFunction in class LogGenDisMixFunction

Parameters:

- index - the index of the part
- startClass - the index of the start class
- startSeq - the index of the start sequence
- endClass - the index of the end class (inclusive)
- endSeq - the index of the end sequence (exclusive)

Throws:

- EvaluationException - if the function could not be evaluated properly
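The part boundaries used by both evaluation methods mix an inclusive end class with an exclusive end sequence. The following minimal sketch shows one plausible reading of these parameters for data organized as one block of sequences per class. The class `PartRangeSketch`, its method, and the `sizes` array are hypothetical; how this class actually traverses its single data set is not stated in this documentation.

```java
public class PartRangeSketch {

    /**
     * Counts the (class, sequence) pairs covered by one part.
     *
     * @param sizes      hypothetical number of sequences per class
     * @param startClass index of the start class
     * @param startSeq   index of the start sequence within the start class
     * @param endClass   index of the end class (inclusive)
     * @param endSeq     index of the end sequence within the end class (exclusive)
     */
    public static int countSequences(int[] sizes, int startClass, int startSeq,
                                     int endClass, int endSeq) {
        int count = 0;
        for (int c = startClass; c <= endClass; c++) {      // end class is inclusive
            int from = (c == startClass) ? startSeq : 0;    // first class starts at startSeq
            int to = (c == endClass) ? endSeq : sizes[c];   // last class stops before endSeq
            for (int n = from; n < to; n++) {               // end sequence is exclusive
                count++;
            }
        }
        return count;
    }
}
```

Under this reading, a part may start in the middle of one class and end in the middle of another, which lets the work be split evenly across threads even when class sizes differ.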
